Continuing the OpenStack + NSX series (Part 1, Part 2 and Part 3) on deploying a multi-tenant OpenStack environment that relies upon NSX, this post will cover the details of the deployment and configuration.

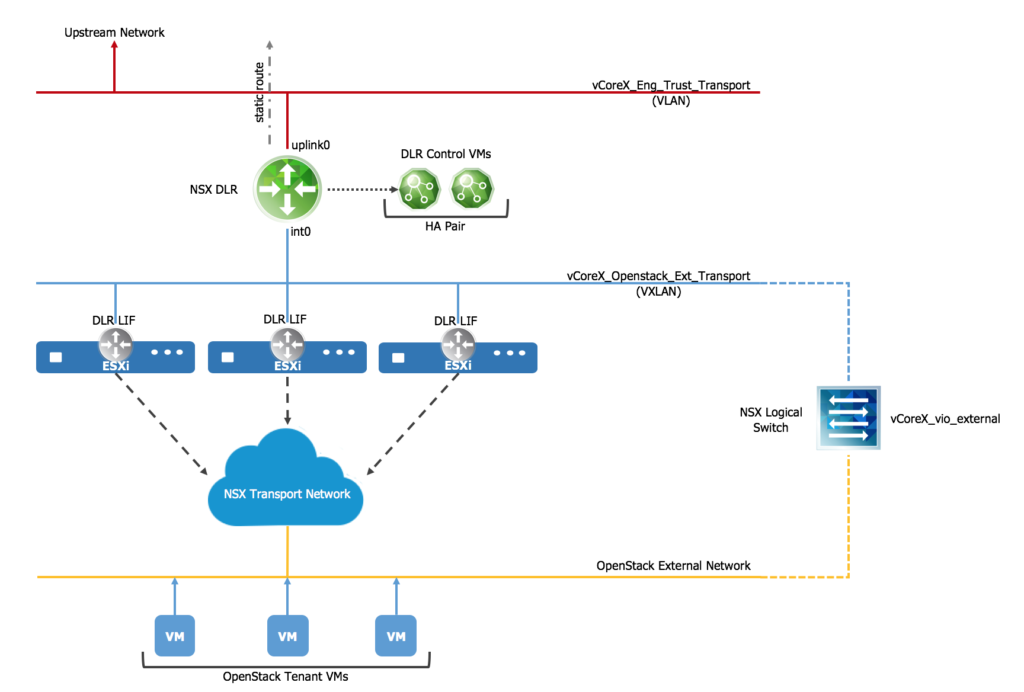

A couple of options have been discussed through the series, including a logical design that relies on an NSX DLR without ECMP Edges:

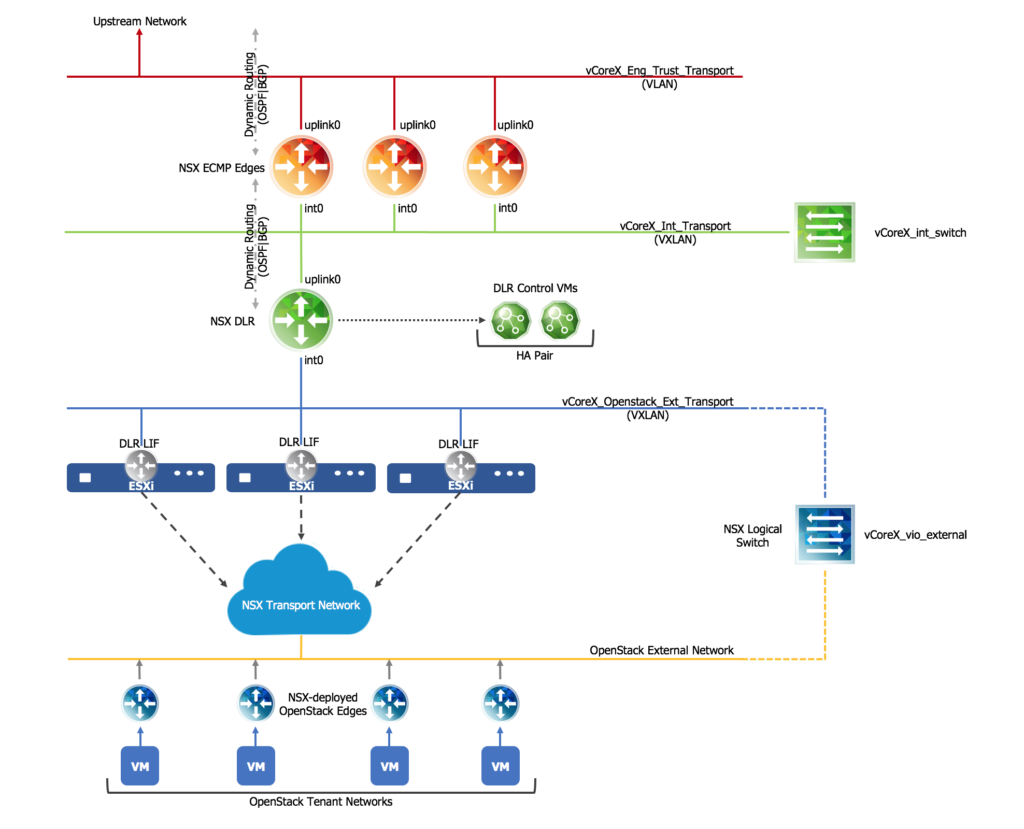

Or a logical virtual network design with a DLR and ECMP Edges:

Regardless of which virtual network design you choose, the NSX Distributed Logical Router and its tie-in to OpenStack both need to be configured. In the course of building out a few VMware Integrated OpenStack labs, proof-of-concepts and pilot environments, I’ve learned a few things.

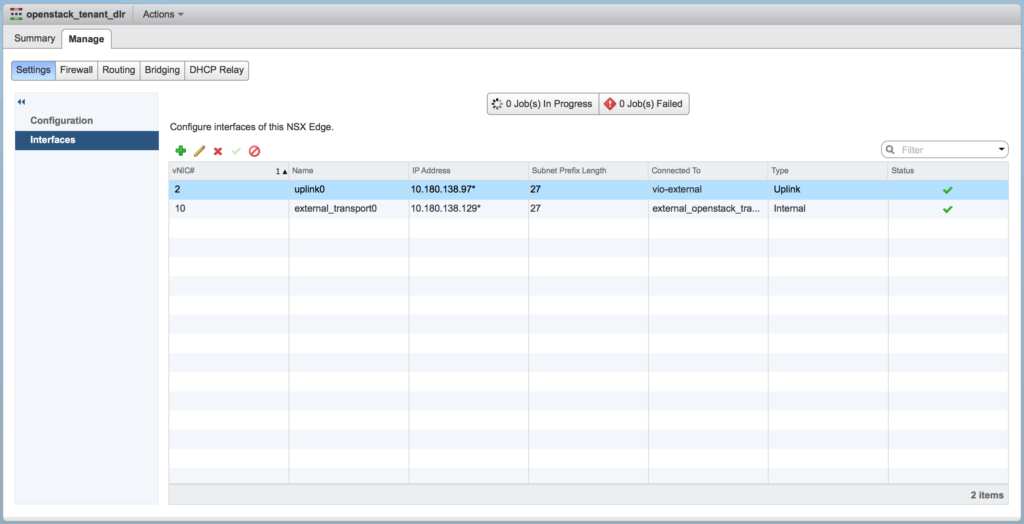

Rather than go through all 30+ steps to implement the entire stack, I want to simply highlight a few key points. When you configure the DLR, you should end up with two interfaces: an uplink to either the ECMP layer or the physical VLAN, and an internal interface to the OpenStack external VXLAN network.

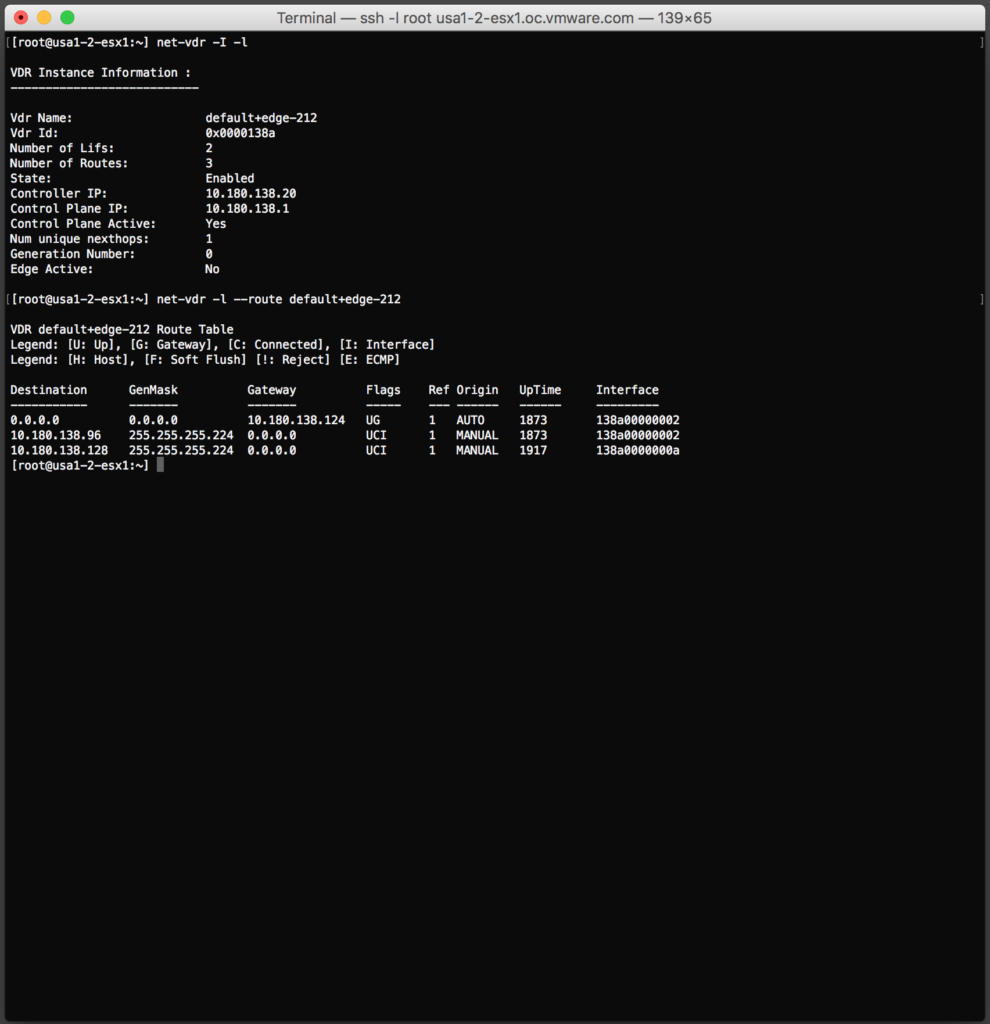

Once the DLR is deployed, you can log into any of the ESXi hosts within the NSX transport zone and verify the routes are properly in place with a few simple CLI commands.
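As a rough sketch of those checks, the helper below wraps two calls to `net-vdr`, the utility NSX for vSphere installs on prepared ESXi hosts. The function name `show_dlr_routes` and the instance name in the usage comment are hypothetical; take the real instance name from the listing on your own host.

```shell
# Hypothetical helper: list the DLR instances on this ESXi host, then show
# the routing table of one of them. Assumes the NSX-v net-vdr utility.
show_dlr_routes() {
    vdr_name="$1"   # instance name as reported by the instance listing
    if ! command -v net-vdr >/dev/null 2>&1; then
        echo "net-vdr not found; run this on an ESXi host prepared for NSX" >&2
        return 1
    fi
    net-vdr --instance -l            # DLR instances known to this host
    net-vdr --route -l "$vdr_name"   # routes programmed for that instance
}

# Usage on the host (the instance name here is only an example):
#   show_dlr_routes "default+edge-1"
```

The uplink and internal networks of the DLR should both show up in the route output, along with a default route toward the uplink.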

With the DLR in place, the NSX components can now be tied into OpenStack. I prefer the API method, using the neutron CLI: log into the VIO management server and then into either of the Controller VMs.

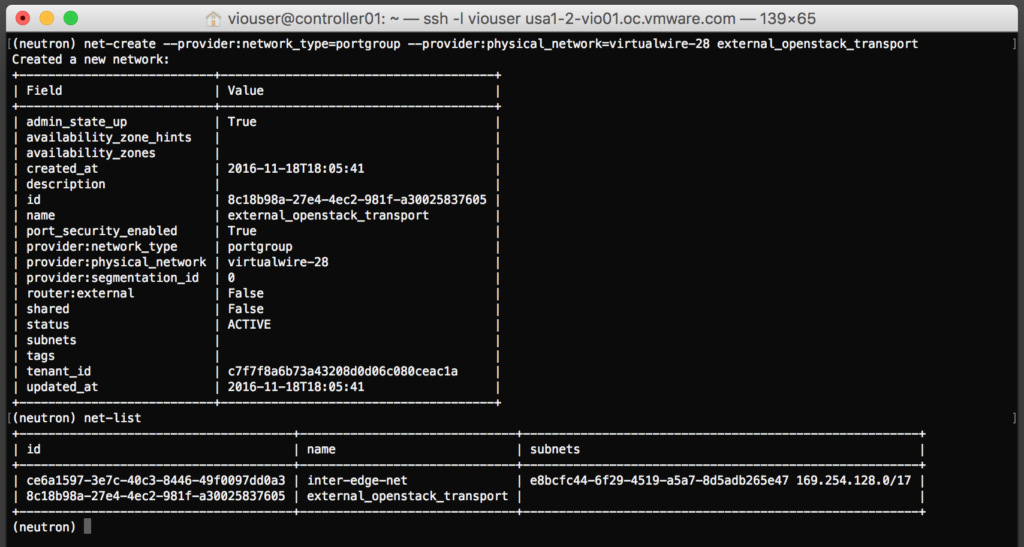

Key points to remember here:

- The physical_network parameter is just the virtualwire-XX string from the NSX-created portgroup.

- The name for the network must exactly match the NSX Logical Switch that was created for the OpenStack external network.
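To illustrate the first point, here is a minimal sketch that pulls the virtualwire-XX token out of a portgroup name. The portgroup name is made up and assumes the usual `vxw-dvs-<n>-virtualwire-<n>-sid-<n>-<name>` pattern; `virtualwire_id` is a hypothetical helper, not part of any NSX or OpenStack tooling.

```shell
# Hypothetical portgroup name; copy the real one from the vSphere client.
portgroup="vxw-dvs-21-virtualwire-7-sid-5003-openstack-external"

# Print the virtualwire-<n> token found in a portgroup name.
virtualwire_id() {
    printf '%s\n' "$1" | sed -n 's/.*\(virtualwire-[0-9][0-9]*\).*/\1/p'
}

physical_network=$(virtualwire_id "$portgroup")
echo "$physical_network"   # prints: virtualwire-7
```

The resulting string is what goes into the physical_network parameter when the network is created.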

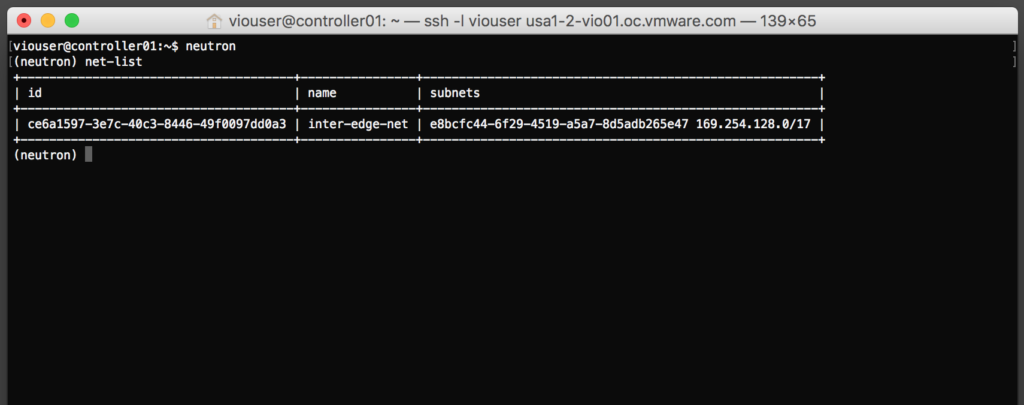

The commands I used here to create the network inside OpenStack:

    $ source <cloudadmin_v3>
    $ neutron net-list
    $ neutron net-create --provider:network_type=portgroup --provider:physical_network=virtualwire-XX nsx_logical_switch_name
    $ neutron net-list

All that remains is adding a subnet to the external network inside OpenStack, which can be done through the Neutron CLI or the Horizon UI. All in all it is a pretty easy implementation; just make sure you reference the proper object names in NSX when creating the OpenStack network objects.
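For the CLI route, a sketch of the subnet step might look like the following. Every value is a placeholder (the addresses come from the 192.0.2.0/24 documentation range), the gateway is assumed to be the DLR internal interface, and disabling DHCP is a common choice for external networks rather than something this environment requires. The snippet only assembles and prints the command so it can be reviewed first.

```shell
# Placeholder values; substitute the addressing of your own external network.
EXT_NET="nsx_logical_switch_name"   # the network created with net-create
EXT_CIDR="192.0.2.0/24"             # documentation range, not a real deployment
EXT_GATEWAY="192.0.2.1"             # assumption: the DLR internal interface
POOL_START="192.0.2.100"            # first floating IP handed out
POOL_END="192.0.2.200"              # last floating IP handed out

# Assemble the neutron call so it can be checked before it is run.
build_subnet_cmd() {
    printf 'neutron subnet-create --name %s --gateway %s --allocation-pool start=%s,end=%s --disable-dhcp %s %s\n' \
        "${EXT_NET}-subnet" "$EXT_GATEWAY" "$POOL_START" "$POOL_END" "$EXT_NET" "$EXT_CIDR"
}

subnet_cmd=$(build_subnet_cmd)
echo "$subnet_cmd"
```

Once the printed command looks right, run it on a Controller VM with the admin credentials sourced, then confirm the result with `neutron subnet-list`.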

Enjoy!