The idea for this post has been on the backlog for a really long time. I recently spent a significant amount of time reviewing VMware SIOC (Storage I/O Control) and became much more familiar with its inner workings. First off, I do not think much of this information will be new to those who have used, or are using, SIOC in a production VMware environment. However, when looking for information on SIOC, I was not able to find a single all-inclusive resource. I am hoping this post will help others understand how SIOC works under the hood and how to determine whether enabling it would meet your use case.

Storage I/O Control

VMware first introduced Storage I/O Control, or SIOC, back in vSphere v4.1 and has steadily improved it with nearly every release of vSphere since then. The latest version of SIOC in vSphere 6.0 includes several enhancements that are helping the adoption rate within enterprise organizations. Storage I/O Control is essentially a disk scheduler that monitors the datastores it is enabled on to determine whether resource contention is occurring. When it detects resource contention, SIOC is able to identify which VMDK (and therefore which VM object) is causing the contention and take action. This becomes challenging when the datastore is a shared resource across multiple ESXi hosts, clusters or vCenters.

SIOC supports Fibre Channel, iSCSI and NFS datastores. RDM devices are not supported.

In order to use SIOC several prerequisites have to be met:

- Datastore needs to be isolated to a single vCenter domain.

- Single extent datastores only.

- The underlying storage array spindles must not be shared with anything outside that single vCenter domain.

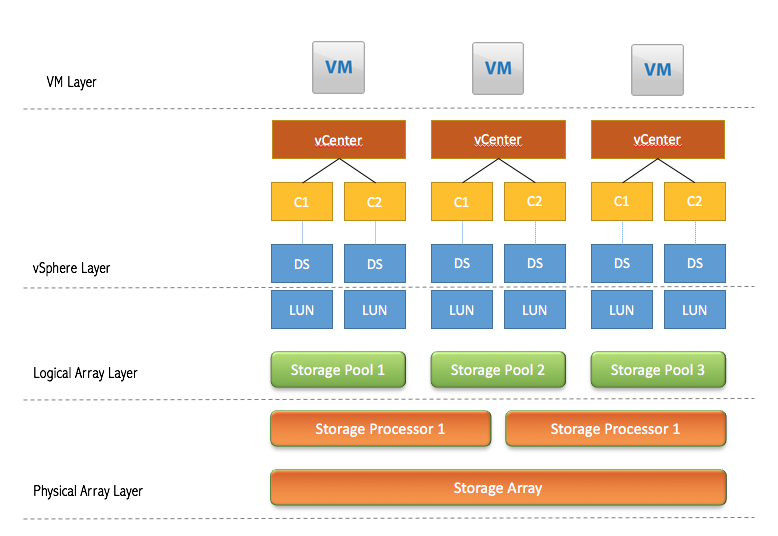

A SIOC friendly logical design for the storage layer looks like the following:

The diagram illustrates how a storage array (iSCSI or Fibre Channel) would carve out the storage pools (disk groups) and present LUNs to the vSphere layer, which are then mapped to VMFS datastores. Notice that no LUNs or datastores are shared across vCenter domains.

SIOC creates a metadata file on each datastore it is enabled on, and that metadata file is used when resource contention occurs to help SIOC identify which VMDK is the culprit. After it identifies the culprit, SIOC will begin limiting the number of I/O operations that can be issued to that datastore. The metadata file is only visible within the SIOC domain it was created on, meaning it can only be seen by the vCenter domain that created it.

Congestion Thresholds

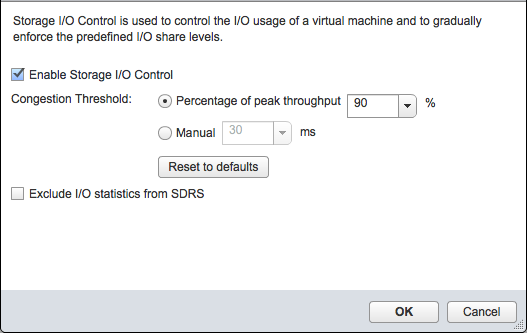

When SIOC is enabled on a datastore, the vSphere Administrator is given two options to choose from for threshold monitoring — peak throughput or response time.

The two defaults are 90% for peak throughput and 30 milliseconds for response time. It is critical that you understand the workload present on the datastore so that these values are set properly. If the threshold values are set improperly, the performance of the virtual machines can be negatively affected. The storage layer SLA requirements of the environment and the capabilities of the physical storage array both factor into how you choose the SIOC threshold values.

The peak throughput percentage is calculated by vCenter based on the storage array capabilities. There is a published table with suggested response times based on the underlying disk types; however, it is several years old and your mileage may vary. I will forego posting it here, but I will note that the baseline of 30 milliseconds may be too high for some modern storage arrays. For example, the environment I work in now is targeting 15-20 milliseconds as the threshold for response times, based on the hardware of the storage arrays and the workloads placed on them. Again, understanding your workload is key!
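To make the response-time threshold a little more concrete, here is a minimal Python sketch of the idea. It is not how SIOC is implemented internally; the function name `is_congested`, the use of a simple average, and the sample latencies are all assumptions made for illustration.

```python
from statistics import mean


def is_congested(latencies_ms, threshold_ms=30.0):
    """Return True when the average observed latency exceeds the threshold.

    Hypothetical helper: it only mimics the idea of a response-time
    congestion threshold and is not SIOC's actual detection logic.
    """
    if not latencies_ms:
        return False  # no samples, nothing to judge
    return mean(latencies_ms) > threshold_ms


# Hypothetical datastore latency samples in milliseconds (average is 22 ms).
samples = [12.0, 18.0, 25.0, 33.0]

print(is_congested(samples))                     # default 30 ms threshold
print(is_congested(samples, threshold_ms=20.0))  # tighter threshold for a faster array
```

With these made-up samples, the default 30 millisecond threshold never trips, while a 20 millisecond threshold does. That is the practical reason to tune the value to the array and workload rather than accept the default.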

Resource Shares & Limits

If the datastore is not using SIOC, the device resources are nominally divided evenly across the VM objects on the datastore. In practice, however, it is a first-come, first-served environment. When all of the VM objects are behaving nicely, each one gets roughly the same amount of resources. However, when one VM object starts to misbehave, there is nothing in place to prevent it from consuming more than its “fair share” of resources. This is commonly referred to as the “noisy neighbor” issue.

When SIOC is enabled on a datastore, it will (theoretically) prevent the noisy neighbor issue from occurring, based on the resource shares and limits configured. That does not mean a single VM cannot consume resources above its allocated share. It just means that if resource contention is occurring, SIOC will begin to balance the resources across all of its VM objects according to their resource shares and limits. Remember, the goal in a storage layer is not to prevent VM objects from having the resources they need. It should be designed to allow them to have what they need without adversely affecting everyone else.

Resource Shares

These work in a similar fashion to resource shares on vCenter Resource Pools, where the share value assigned to a group is divided among the VM objects within that group. With SIOC specifically, the shares assigned to all the VM objects on a given ESXi host are totaled, and each object receives resources in proportion to its portion of that total. When every object has the same number of shares, that works out to an even split across the objects.
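The arithmetic behind shares can be sketched in a few lines of Python. This is an illustration of proportional division under contention, not VMware's scheduler; the function name `share_fractions`, the VM names, and the share values are assumptions chosen for the example.

```python
def share_fractions(shares_by_vm):
    """Map each VM to the fraction of datastore resources its shares entitle it to.

    Hypothetical helper: each VM's fraction is its own shares divided by
    the total shares of all VMs being compared.
    """
    total = sum(shares_by_vm.values())
    if total == 0:
        return {vm: 0.0 for vm in shares_by_vm}
    return {vm: shares / total for vm, shares in shares_by_vm.items()}


# Illustrative share values: two VMs at 1000 and one at 2000.
vms = {"vm-a": 1000, "vm-b": 1000, "vm-c": 2000}

for vm, fraction in share_fractions(vms).items():
    print(f"{vm}: {fraction:.0%} of available I/O under contention")
```

When all VMs carry the same share value, every fraction is identical, which is the even split described above. Raising one VM's shares only changes its slice relative to its neighbors, and only matters while contention is occurring.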

Limits

Beyond resource shares for storage, limits provide a hard upper bound for storage I/O traffic on a virtual machine. The key difference between limits and resource shares is that a limit is enforced on a virtual machine even if the storage array is not currently under contention. Resource shares are only enforced when I/O contention is occurring. By default, each virtual machine is unlimited. I suggest being very careful when applying a limit to a virtual machine within an environment.
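The difference between limits and shares is easier to see with numbers. The Python sketch below is a simplified model, not SIOC's real algorithm: `effective_iops`, the `capacity` figure, and the VM values are hypothetical, and any headroom a VM leaves unused is not redistributed to its neighbors.

```python
def effective_iops(demand, shares, limits, capacity):
    """Estimate per-VM IOPS under a simplified limits-plus-shares model.

    Hypothetical model: limits always apply; shares apply only when the
    capped demand exceeds the datastore capacity (contention).
    """
    # A limit of None means unlimited, which is the default.
    capped = {
        vm: d if limits.get(vm) is None else min(d, limits[vm])
        for vm, d in demand.items()
    }
    if sum(capped.values()) <= capacity:
        return capped  # no contention, so shares are not enforced

    total_shares = sum(shares[vm] for vm in capped)
    return {
        vm: min(capped[vm], capacity * shares[vm] / total_shares)
        for vm in capped
    }


demand = {"vm-a": 500, "vm-b": 3000}   # IOPS each VM wants to issue
shares = {"vm-a": 1000, "vm-b": 1000}  # equal shares
limits = {"vm-b": 2000}                # vm-a is left unlimited

print(effective_iops(demand, shares, limits, capacity=10000))  # idle array
print(effective_iops(demand, shares, limits, capacity=2000))   # contended array
```

In the first call the array has plenty of headroom, yet vm-b is still held to its 2000 IOPS limit. In the second call contention kicks in and shares cut vm-b back further, while vm-a keeps the 500 IOPS it asked for because that is below its entitlement.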

Summary

I think the methodology used by SIOC works really well with shared storage arrays, and it is one of the key features in vSphere that should be used whenever possible inside a private cloud. One important thing to note is that SIOC does not work with Virtual SAN. Tomorrow’s post will cover the methodology Virtual SAN uses and the advantages SIOC has over Virtual SAN's I/O limiting.