I have been working on an OpenStack architecture design using VMware Integrated OpenStack (VIO) for the past several months. The design is being developed for an internal cloud service offering and is also the design for my VCDX certification pursuit in 2017. As the design has gone through the Proof-of-Concept and, later, the Pilot phases, determining how to deliver a multi-tenant/personal cloud offering has proven challenging. The design relies heavily on the NSX software-defined networking (SDN) platform, both because of VIO requirements and because of the requirements of the service offering. As such, all north-south traffic goes through a set of NSX Edge devices. Before reaching the north-south boundary, however, the east-west tenant traffic needed to be addressed.

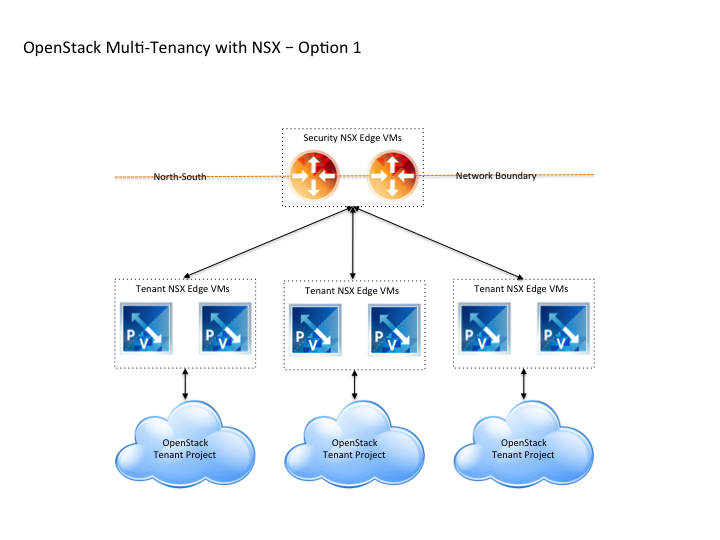

I had seen other designs discussed in which all OpenStack tenants relied upon a single, large (/22 or greater) external network. However, for a personal cloud or multi-tenant cloud offering based on OpenStack, I felt this was not a good design choice for this environment. I wanted a higher level of separation between tenants, and one option involved a secondary pair of NSX Edge devices for each tenant. The diagram above describes the logical approach.

The above logical representation shows how the deployed tenant NSX Edges are connected upstream to the security or L3 network boundary and downstream to the OpenStack Project that is created for each tenant. At a small to medium scale, I believe this model works operationally: the tenant NSX Edges create a logical separation between each OpenStack tenant and (if assigned on a per-team basis) should remain relatively manageable as the environment scales to dozens of tenants.

The NSX Edge devices are deployed inside the same OpenStack Edge cluster specified during the VIO deployment; however, they are managed outside of OpenStack and the tenant has no control over them. Each secondary pair of NSX Edge devices for a tenant is configured with two interfaces: one uplink to the north-south NSX Edge security gateway and a single internal interface that becomes the external network inside OpenStack. It is behind this internal interface that the OpenStack tenant deploys all of their own micro-segmented networks, and it is from this interface's network that they consume floating IP addresses.

An upcoming post will describe the deployment and configuration of these tenant NSX Edge devices and their corresponding configuration inside OpenStack.

I am concerned with this approach once the environment grows to 50+ OpenStack tenants. It is at that point that operational management difficulties will begin to surface. Specifically, there is a lack of available automation for creating, linking and configuring both the NSX components and the tenant within OpenStack itself (projects, users, role-based access, external networks, subnets, etc.).
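To give a sense of what the missing automation would have to keep track of, here is a minimal Python sketch that generates a per-tenant plan: a project name, a tenant Edge name, an external network name and an external subnet carved out of a larger block. Everything in it is an assumption made for illustration: the `10.20.0.0/16` supernet, the /24 per tenant, the `edge-` and `ext-` naming, and the choice of the first usable address as the gateway on the tenant Edge. It only produces data; it does not call NSX or OpenStack.

```python
import ipaddress
from dataclasses import dataclass


@dataclass(frozen=True)
class TenantPlan:
    """What would need to exist for one tenant (all names are hypothetical)."""
    project_name: str
    edge_name: str
    external_network_name: str
    external_cidr: str
    gateway_ip: str


def build_tenant_plans(tenants, supernet="10.20.0.0/16", new_prefix=24):
    """Carve one external subnet per tenant out of an assumed supernet."""
    subnets = ipaddress.ip_network(supernet).subnets(new_prefix=new_prefix)
    plans = []
    for name, subnet in zip(tenants, subnets):
        # Assumption: the first usable address sits on the tenant Edge.
        gateway = next(iter(subnet.hosts()))
        plans.append(
            TenantPlan(
                project_name=name,
                edge_name=f"edge-{name}",
                external_network_name=f"ext-{name}",
                external_cidr=str(subnet),
                gateway_ip=str(gateway),
            )
        )
    if len(plans) < len(tenants):
        # zip() stopped early, so the supernet ran out of subnets.
        raise ValueError("supernet is too small for the number of tenants")
    return plans


if __name__ == "__main__":
    for plan in build_tenant_plans(["team-a", "team-b", "team-c"]):
        print(plan)
```

Even in this reduced form, each tenant carries five values that have to stay consistent across NSX and OpenStack, which is where the manual effort at 50+ tenants comes from.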

Update

After the initial article was written, further testing was performed that has influenced how multi-tenant OpenStack external networks can be implemented. As such, Part 2 of the series includes the new information. For completeness and archival purposes, I’ve decided to include that information here as well.

Part 2

The post yesterday discussed a method for having segmented multi-tenant networks inside of OpenStack. As a series of test cases was worked through with a setup of this nature, a gaping hole in OpenStack came into view.

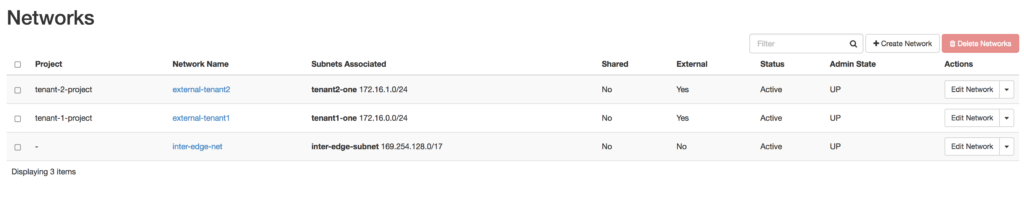

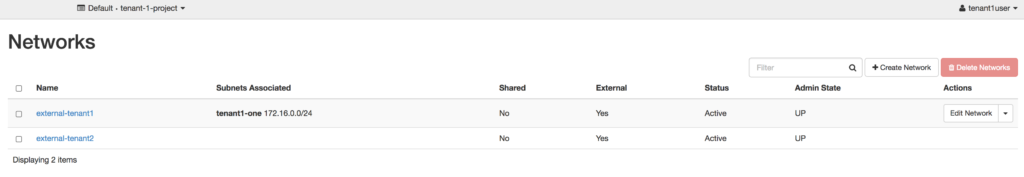

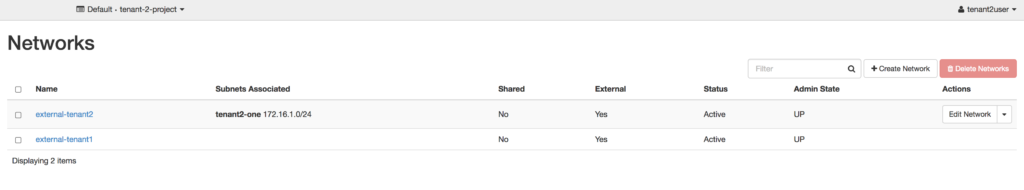

What do the previously described multiple external networks look like inside OpenStack?

In the second and third screenshots, you can see that both tenants see both external networks, but each sees a subnet listed only for the external network that was created with its own tenant-id. At first glance, this would seem to be doing what was intended: each tenant receives its own external network to consume floating IP addresses from. Unfortunately, it begins to break down when a tenant goes to Compute -> Access & Security -> Floating IPs in Horizon.

The above screenshot shows a tenant being assigned a floating IP address from what should have been an external network they did not have access to.

I felt pretty much like Captain Picard after working through the test cases. Surely, OpenStack would allow a design where tenants have segmented external networks — right?

Unfortunately, OpenStack does not honor this type of segmented external networking design: it will allow any tenant to consume or claim a floating IP address from any of the other external networks. To see how OpenStack implements external networks in full, you can read the documentation here. The passage at issue is the following:

> Nevertheless, the concept of ‘external’ implies some forms of sharing, and this has some bearing on the topologies that can be achieved. For instance it is not possible at the moment to have an external network which is reserved to a specific tenant.

Essentially, OpenStack Neutron thinks of external networks differently than, I believe, most architects do. It also does not clearly honor the tenant-id attribute that is specified when the network is created, even when the shared attribute is not enabled on the external network. The methodology OpenStack Neutron uses is more in line with the AWS consumption model: everyone drinks from the same pool and there is no segmentation between the tenants. I personally do not believe that model works in a private cloud where there are multiple tenants.
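Since Neutron will not enforce the separation, one way to at least detect the problem is to audit floating IP allocations against the intended ownership of each external network. The following is a minimal Python sketch of that check, not something taken from the test environment. It works on plain dictionaries that use the field names the Neutron API returns for networks and floating IPs (`id`, `tenant_id`, `router:external`, `floating_network_id`, `floating_ip_address`); the sample IDs and addresses are invented, and fetching the real data from Neutron is left out.

```python
def find_cross_tenant_floating_ips(networks, floating_ips):
    """Return floating IPs taken from an external network owned by another tenant."""
    # Map each external network to the tenant it was created for.
    owner_by_network = {
        net["id"]: net["tenant_id"]
        for net in networks
        if net.get("router:external")
    }
    violations = []
    for fip in floating_ips:
        owner = owner_by_network.get(fip["floating_network_id"])
        if owner is not None and owner != fip["tenant_id"]:
            violations.append(fip)
    return violations


# Invented sample data: two tenants, one external network each.
sample_networks = [
    {"id": "ext-net-a", "tenant_id": "tenant-a", "router:external": True},
    {"id": "ext-net-b", "tenant_id": "tenant-b", "router:external": True},
    {"id": "private-a", "tenant_id": "tenant-a", "router:external": False},
]
sample_floating_ips = [
    {"floating_ip_address": "192.0.2.10", "floating_network_id": "ext-net-a",
     "tenant_id": "tenant-a"},
    # tenant-a has claimed an address from tenant-b's external network.
    {"floating_ip_address": "198.51.100.7", "floating_network_id": "ext-net-b",
     "tenant_id": "tenant-a"},
]

if __name__ == "__main__":
    for fip in find_cross_tenant_floating_ips(sample_networks, sample_floating_ips):
        print(fip["floating_ip_address"], "claimed by", fip["tenant_id"])
```

A check like this only reports the cross-tenant allocation after the fact; it does nothing to prevent it.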

The next post in the series will discuss a potential design for working around the issue inside OpenStack Neutron.