As the project moves into its next phase, Ansible is increasingly relied upon to deploy the individual components that define the environment. This installment of the series covers using Ansible with VMware NSX. VMware provides a set of Ansible modules on GitHub for integrating with NSX. They make it straightforward to create NSX Logical Switches, NSX Distributed Logical Routers, NSX Edge Services Gateways (ESGs) and many other components.

The modules live in the vmware/nsxansible repository on GitHub.

Step 1: Installing Ansible NSX Modules

The Ansible NSX modules depend on several supporting packages, which I installed on the Ubuntu Ansible Control Server (ACS):

```shell
$ sudo apt-get install python-dev libxml2 libxml2-dev libxslt1-dev zlib1g-dev npm
$ sudo pip install nsxramlclient
$ sudo npm install -g https://github.com/yfauser/raml2html
$ sudo npm install -g https://github.com/yfauser/raml2postman
$ sudo npm install -g raml-fleece
```

The ACS also needs the NSX for vSphere RAML repository. Its RAML specification describes the NSX for vSphere API. You must clone this repository to a local directory on the ACS before any NSX playbook will run.
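Because the project already relies on Ansible, the cloning step can also be written as a small play. The sketch below is hypothetical and is not part of the Infrastructure-as-Code repository. It assumes three things: the repository URLs are vmware/nsxansible and vmware/nsxraml, both are cloned into the home directory of the user running Ansible, and the git client is installed on the ACS. With that layout, the RAML file lands at the path that nsxanswer.yml references.

```yaml
---
# Hypothetical helper play (not from the Infrastructure-as-Code repo):
# clones the NSX Ansible modules and the NSX for vSphere RAML spec onto the ACS.
- hosts: localhost
  connection: local
  gather_facts: False
  vars:
    # Assumed clone location; keep it in sync with raml_file in nsxanswer.yml
    repo_root: "{{ lookup('env', 'HOME') }}"

  tasks:
    - name: Clone the NSX Ansible modules
      git:
        repo: "https://github.com/vmware/nsxansible.git"
        dest: "{{ repo_root }}/nsxansible"

    - name: Clone the NSX for vSphere RAML specification
      git:
        repo: "https://github.com/vmware/nsxraml.git"
        dest: "{{ repo_root }}/nsxraml"
```

Ansible also has to find the NSX modules when the main playbook runs. One way is to run that playbook from inside the cloned nsxansible directory. Another is to point the ANSIBLE_LIBRARY environment variable at the folder that holds the modules, which is assumed here to be the repository's library/ directory.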

Now that all of the prerequisites are met, the Ansible playbook for creating the NSX components can be written.

Step 2: Ansible Playbook for NSX

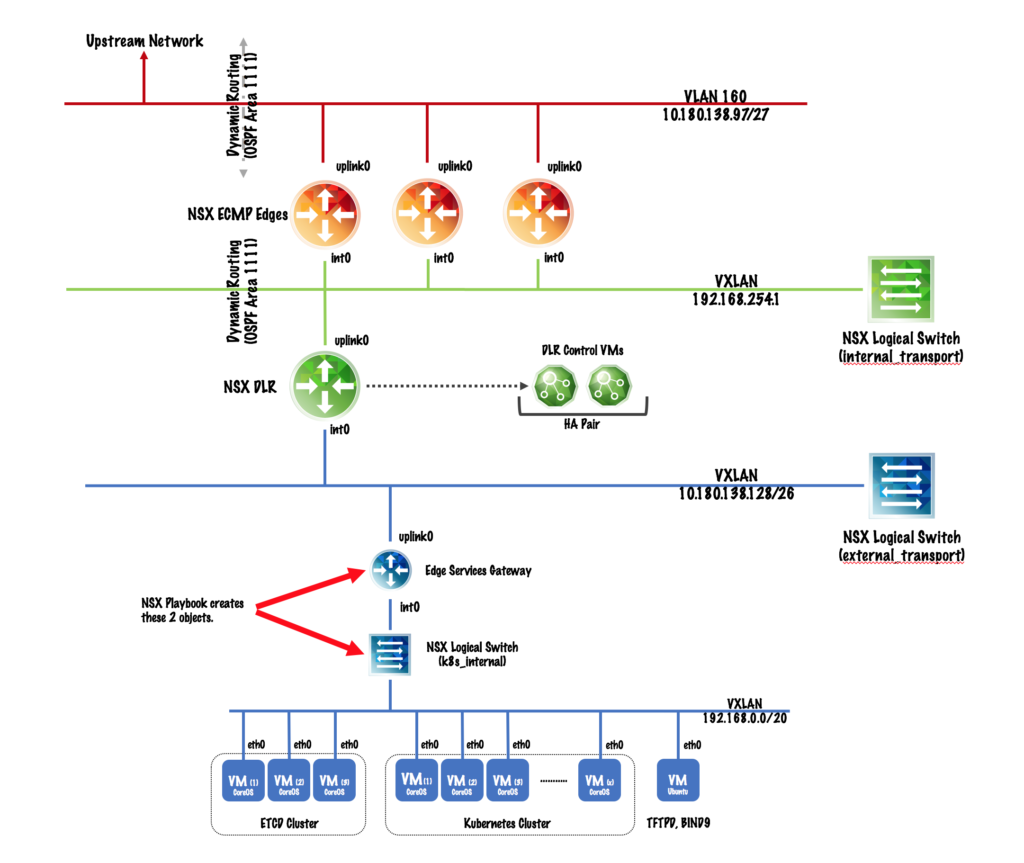

The first thing to know is that the GitHub repository for the NSX modules includes many good examples in its test_*.yml files. I drew on those examples to create the playbook below. To understand what the playbook is meant to create, let's first review the logical network design for the Infrastructure-as-Code project.

The design calls for three layers of NSX virtual networking: the NSX ECMP Edges, the Distributed Logical Router (DLR) and the tenant's Edge Services Gateway (ESG). The Ansible playbook below assumes the ECMP Edges and the DLR already exist. It focuses on creating the tenant's HA Edge and configuring its component services (SNAT/DNAT, DHCP, routing).

I wrote a playbook to create the k8s_internal logical switch and the NSX HA Edge (aka ESG). It takes much of that example content and collapses it into a single playbook. You can find it in the Virtual Elephant GitHub repository for the Infrastructure-as-Code project.

As I've stated, this project is mostly about giving me a detailed game plan for learning several technologies that are new to me, including Ansible. The NSX playbook is the first time I've used an answer file to keep several sensitive, environment-specific variables out of the playbook itself. The nsxanswer.yml file contains the variables required to connect to the NSX Manager, which is the component Ansible communicates with to create the logical switch and ESG.

Ansible Answer File: nsxanswer.yml

```yaml
nsxmanager_spec:
  raml_file: '/HOMEDIR/nsxraml/nsxvapi.raml'
  host: 'usa1-2-nsxv'
  user: 'admin'
  password: 'PASSWORD'
```

The nsxvapi.raml file is the API specification from the RAML repository cloned in Step 1. Change the raml_file path to match your local environment, and set the password value for your NSX Manager.

Ansible Playbook: nsx.yml

```yaml
---
- hosts: localhost
  connection: local
  gather_facts: False
  vars_files:
    - nsxanswer.yml          # NSX Manager connection details (nsxmanager_spec)
  vars_prompt:
    - name: "vcenter_pass"
      prompt: "Enter vCenter password"
      private: yes
  vars:
    vcenter: "usa1-2-vcenter"
    datacenter: "Lab-Datacenter"
    datastore: "vsanDatastore"
    cluster: "Cluster01"
    vcenter_user: "USER@DOMAIN"   # vCenter SSO user for your environment
    # switch, uplink and vip are supplied on the command line
    switch_name: "{{ switch }}"
    uplink_pg: "{{ uplink }}"
    ext_ip: "{{ vip }}"
    tz: "tzone"

  tasks:
    - name: NSX Logical Switch creation
      nsx_logical_switch:
        nsxmanager_spec: "{{ nsxmanager_spec }}"
        state: present
        transportzone: "{{ tz }}"
        name: "{{ switch_name }}"
        controlplanemode: "UNICAST_MODE"
        description: "Kubernetes Infra-as-Code Tenant Logical Switch"
      register: create_logical_switch

    # The next three tasks look up vCenter managed object IDs needed by the ESG
    - name: Gather MOID for datastore for ESG creation
      vcenter_gather_moids:
        hostname: "{{ vcenter }}"
        username: "{{ vcenter_user }}"
        password: "{{ vcenter_pass }}"
        datacenter_name: "{{ datacenter }}"
        datastore_name: "{{ datastore }}"
        validate_certs: False
      register: gather_moids_ds
      tags: esg_create

    - name: Gather MOID for cluster for ESG creation
      vcenter_gather_moids:
        hostname: "{{ vcenter }}"
        username: "{{ vcenter_user }}"
        password: "{{ vcenter_pass }}"
        datacenter_name: "{{ datacenter }}"
        cluster_name: "{{ cluster }}"
        validate_certs: False
      register: gather_moids_cl
      tags: esg_create

    - name: Gather MOID for uplink
      vcenter_gather_moids:
        hostname: "{{ vcenter }}"
        username: "{{ vcenter_user }}"
        password: "{{ vcenter_pass }}"
        datacenter_name: "{{ datacenter }}"
        portgroup_name: "{{ uplink_pg }}"
        validate_certs: False
      register: gather_moids_upl_pg
      tags: esg_create

    - name: NSX Edge creation
      nsx_edge_router:
        nsxmanager_spec: "{{ nsxmanager_spec }}"
        state: present
        name: "{{ switch_name }}-edge"
        description: "Kubernetes Infra-as-Code Tenant Edge"
        resourcepool_moid: "{{ gather_moids_cl.object_id }}"
        datastore_moid: "{{ gather_moids_ds.object_id }}"
        datacenter_moid: "{{ gather_moids_cl.datacenter_moid }}"
        interfaces:
          # vnic0: external uplink carrying the tenant VIP
          vnic0: {ip: "{{ ext_ip }}", prefix_len: 26, portgroup_id: "{{ gather_moids_upl_pg.object_id }}", name: 'uplink0', iftype: 'uplink', fence_param: 'ethernet0.filter1.param1=1'}
          # vnic1: internal interface on the tenant logical switch
          vnic1: {ip: '192.168.0.1', prefix_len: 20, portgroup_id: "{{ switch_name }}", name: 'int0', iftype: 'internal', fence_param: 'ethernet0.filter1.param1=1'}
        default_gateway: "{{ gateway }}"
        remote_access: 'true'
        username: 'admin'
        password: "{{ nsx_admin_pass }}"
        firewall: 'false'
        ha_enabled: 'true'
      register: create_esg
      tags: esg_create
```

The playbook expects three extra variables on the command line when it runs: switch, uplink and vip. The switch variable names the logical switch. The uplink variable names the uplink VXLAN portgroup the tenant ESG will connect to. The vip variable is the external VIP to be assigned from the network block. For now these variables are passed on the command line, but they will likely move into a single Ansible answer file as the project matures. A single answer file for the entire set of playbooks should make it simpler to adopt the Infrastructure-as-Code project in other vSphere environments.
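Instead of typing each value, you can collect the extra variables in a YAML file and pass it with ansible-playbook's `-e @file` syntax. That is a small step toward the single answer file described above. This is a minimal sketch. The file name tenant_vars.yml is hypothetical, and every value except k8s_internal is a placeholder. It also assumes that gateway and nsx_admin_pass are supplied the same way. nsx.yml references both, but neither is defined in the playbook's vars or in the nsxanswer.yml shown above.

```yaml
---
# tenant_vars.yml (hypothetical name): extra variables for nsx.yml
switch: 'k8s_internal'        # name of the tenant logical switch
uplink: 'UPLINK-PORTGROUP'    # placeholder: uplink VXLAN portgroup for the tenant ESG
vip: 'EXTERNAL-VIP'           # placeholder: external VIP from the network block
# Assumption: nsx.yml also expects these two, so they are supplied here as well
gateway: 'GATEWAY-IP'         # placeholder: default gateway for the ESG uplink
nsx_admin_pass: 'PASSWORD'    # placeholder: admin password for the new ESG
```

You would then run `ansible-playbook nsx.yml -e @tenant_vars.yml` and answer the vCenter password prompt defined under vars_prompt. You can also pass the values inline, for example `-e "switch=k8s_internal uplink=UPLINK-PORTGROUP vip=EXTERNAL-VIP"`. Adding `--tags esg_create` runs only the tasks tagged esg_create, which skips the untagged logical switch creation task.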

Now that Ansible playbooks exist for creating the NSX components and the VMs for the Kubernetes cluster, the next step will be to begin configuring the software within CoreOS to run Kubernetes.

Stay tuned.